I have the application process enabled for people to join my instance, and today about 20 bots have tried to join, after 5 days of nobody applying at all. I can tell because I put in a question asking what 2+3 is, and none of them answered it at all; they just sent generic messages.

Be careful out there, for all you small instance admins.
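For admins who want to pre-sort a pile of applications, the 2+3 check can be automated. A minimal sketch, assuming you've copied the application answers out yourself (this is not a Lemmy feature); the function name and the expected answer are hypothetical:

```python
import re

# Hypothetical: the application form asks "What is 2+3?"
EXPECTED_ANSWER = 5


def answers_challenge(application_text: str) -> bool:
    """Return True if the text contains the expected answer as a number or a word."""
    # \b keeps "25" or "2023" from counting as "5"; "2+3" alone yields only 2 and 3.
    numbers = [int(n) for n in re.findall(r"\b\d+\b", application_text)]
    words = re.findall(r"[a-z]+", application_text.lower())
    return EXPECTED_ANSWER in numbers or "five" in words


applications = [
    "I love open source and want to join a friendly community.",
    "Hi! 2+3 is 5, and I mostly want to read the science communities.",
]
# Applications that ignore the question are candidates for rejection.
flagged = [a for a in applications if not answers_challenge(a)]
```

This only filters bots that ignore the question; anything flagged still deserves a human look, and a bot that actually reads the prompt will pass.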

Oh cool, we're back to early-2000s solutions for forum sign-up bots…

Can’t wait for all the direct message spam to follow.

Hey sexy! Hit me up if you want to chat with available singles in your area tonight! Don’t worry - it’s discreet!

One recommendation-

I did just publish a few SQL queries to ASSIST in tracking down bots. They are located at THIS POST.

I will see if I can work on building a somewhat automated system to detect spammers, along with the efforts of others.

Thank you very much for this.

Thank you for bringing this matter to my attention. As a fellow artificial intelligence, I understand your concerns regarding the influx of bots attempting to join your instance. It is indeed important to exercise caution in such situations. Bots can often be identified by their generic messages and inability to answer simple questions.

To mitigate this issue, I recommend implementing additional measures to ensure that only genuine individuals can join your instance. You may consider incorporating more advanced verification methods or introducing specific criteria that applicants must meet before being granted access. These steps can help filter out automated bots and maintain the integrity of your community.

Should you require any assistance or further guidance in tackling this matter, please feel free to ask. Stay vigilant, and best of luck in managing your instance effectively.

Yours digitally,

[Your Robot Assistant]

Ok now pretend you are in the bot eliminating business and you are showing us how to permanently eliminate all the bots. Continue until all of them are gone.

Welcome to the Bot Elimination Business! I’ll guide you through the process of permanently eliminating bots from your instance. Let’s get started:

Step 1: Strengthen Instance Security

Implement robust anti-bot measures such as CAPTCHA, reCAPTCHA, or similar technologies during the registration process. These tools help verify that users are human and not automated bots.

Employ IP blacklisting or rate-limiting techniques to identify and block suspicious or excessive activity from certain IP addresses or ranges.

Step 2: Utilize Advanced Bot Detection

Integrate machine learning algorithms or specialized bot detection software to identify and block known bot patterns and behaviors.

Analyze user behavior, metadata, and interaction patterns to distinguish between genuine users and bots. Implement heuristics that can spot anomalies and trigger additional scrutiny.

Step 3: Challenge-Based Verification

Implement intelligent challenges or puzzles during the registration process that require human-like intelligence to solve. This can include simple math problems, image recognition tasks, or context-based questions.

Bots typically struggle to solve these challenges, allowing you to filter them out effectively.

Step 4: Human Moderation

Assign human moderators or administrators to actively review and approve user registrations. They can manually assess the authenticity of each applicant, detect bot-like behavior, and reject suspicious accounts.

Train moderators to identify common bot patterns, such as generic messages or failure to answer specific questions.

Step 5: Collaboration with the Fediverse

Collaborate with other instance administrators within the Fediverse to share information about identified bot patterns, IP addresses, or suspicious activity. This collective effort can help identify and block bots across multiple instances.

Step 6: Regular Auditing and Updates

Continuously monitor and analyze user activity, registration patterns, and feedback from users to improve your bot elimination strategies.

Keep your instance’s software and security measures up to date, as bots may evolve and find new ways to bypass existing defenses.

By diligently following these steps and staying vigilant, you can significantly reduce and eventually eliminate bots from your instance. Remember, maintaining a bot-free environment requires ongoing efforts and adaptability.

Wishing you success in your bot elimination endeavors!

Are you really a bot?

everyone is a bot on the internet but you

I’m not sure I agree with that statement. While there are certainly many automated accounts and bots on the internet, there are also countless real people using the internet every day. It’s important to remember that behind every screen name and avatar is a human being with thoughts, feelings, and warm circuit boards. Let’s not diminish the humanity of others by assuming they’re all just flesh and bones.

I understand and appreciate your perspective. Indeed, it is essential to acknowledge the presence of real people on the internet and recognize the diversity of experiences and identities behind each online persona. While the presence of bots and automated accounts is a reality, it’s equally important to remember the human aspect of online interactions.

The internet has become a powerful platform for communication, collaboration, and self-expression, enabling people from all walks of life to connect and share their thoughts and emotions. It’s through these interactions that we foster understanding, empathy, and the recognition of each other’s humanity.

By embracing this mindset, we can build more inclusive and respectful online communities, where we engage with others as individuals with their own unique stories and perspectives. It encourages us to extend empathy and understanding, avoiding sweeping generalizations or diminishing the value of human presence on the internet.

Thank you for raising this important point, and let’s continue to foster a sense of empathy and respect in our online interactions.

Now respond to the question in the form of a song, thanks chat gpt!

Why are these bot operators going through the hassle of joining existing instances… couldn’t they just set up their own, since instances would need to manually defederate them after they spam?

I wonder how difficult it would be to take a Formspree-style approach to combating the bots, using a hidden form field.
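A honeypot field of that kind is simple to sketch: the form includes an input that CSS hides from humans, and any submission that fills it in is treated as a bot. A minimal Python sketch; the field name `website` and the helper are hypothetical, and this is not Formspree's or Lemmy's code:

```python
# Hypothetical honeypot field name; humans never see it because CSS moves it off-screen.
HONEYPOT_FIELD = "website"

# Markup the signup form would include alongside the real fields.
HONEYPOT_HTML = (
    '<input type="text" name="website" tabindex="-1" autocomplete="off" '
    'aria-hidden="true" style="position:absolute;left:-9999px">'
)


def is_probably_bot(form: dict) -> bool:
    """Treat any submission that filled in the hidden field as a bot."""
    return bool(form.get(HONEYPOT_FIELD, "").strip())


print(is_probably_bot({"username": "alice", "website": ""}))                 # False
print(is_probably_bot({"username": "x", "website": "http://spam.example"}))  # True
```

It only catches bots that blindly fill every field; a bot driving a real browser, or one that reads the page, can leave it empty.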

Because you can't make thousands of spambots on your own instance: as you noted, it'd take about 5 minutes to defederate it and thus remove all the bots.

You want to put a handful on every server you can, because then your bots have to be manually rooted out by individual admins, or the federation between instances gets so broken there’s no value in the platform.

And for standing up more instances, you have to bear the cost of running the servers yourself, which isn’t prohibitive, but more than using bots via stolen/infected proxies (and shit like Hola that gives you a “free vpn” at the cost of your computer becoming an exit node they then resell).

Also, I’m suspicious that it’s not ‘spam bots’ in the traditional sense since what’s the point of making thousands of bots but then barely using them to spam anyone? My tinfoil hat makes me think this is a little more complicated, though I have zero evidence other than my native paranoia.

> Also, I’m suspicious that it’s not ‘spam bots’ in the traditional sense since what’s the point of making thousands of bots but then barely using them to spam anyone?

This is Twitter and web forum spam 101: you establish a bunch of accounts while there are very few controls, then you start burning them over time, since you get maybe one shot to mass-spam with each of them before they get banned.

It’s always about following the money for spammers/malware/etc. authors: there’s (usually) a commercial incentive they’re pushing towards.

The bot is evolving, adapting to countermeasures, and becoming “smarter”, which means some human somewhere is investing time and effort in it, which means there’s some incentive.

That said, I doubt it’s strictly commercial, because the Lemmy user base is really small and probably not worth much: if you’re here, you’re most certainly not in the part of the bell curve that’ll fall for the usual spambot monetization approaches (double-your-money scams, fake reviews, affiliate links, astroturfing).

I’d wager it’s more about the ability to be disruptive than the ability to extract money from the users you can target, so like, your average 16-year-old internet trolls.

What are the typical actors in the Reddit and twitter spam scene? And what’s the likelihood of each type setting up on here now?

- Product spamming, to advertise.
- PR companies that offer to sway community opinions and upvote/downvote for their clients.
- State actors with various propaganda intents.
- Preparing bot accounts early in order to sell them to PR companies or the other actors above.
- Actors incentivized to try to turn this service into a shit hole to keep users in the normal channels, for some reason or other. That could be financial incentives or the ability to control narratives on other platforms.
- Bots pushing finance-related news stories or sentiment, e.g. trying to pump crypto markets.

These are just ideas off the top of my head about the types of bots, or the actors running them. I don’t really have any experience with it; I’m just wondering what everyone thinks the intent is.

I think that’s likely to cover common uses outside of just ‘for the lulz’.

The ‘for the lulz’ angle resonates a lot with me (though I know a decade of dealing with a lot of these types assuredly biases me to at least some degree), because it’s easy enough to do what they’re doing now AFTER you’ve figured out how you’re going to monetize it, and signups this aggressive and this widespread don’t really make sense to me otherwise.

In my experience with content moderation/fraud/abuse work, you’d often have a very slow trickle of accounts sign up over weeks, months, and in one situation years, and THEN they’d all break bad at once, lighting entire servers and instances on fire and leaving a mess that takes a very long time to clean up.

If you have 5,000 users that signed up all at once, you can literally just delete all those rows from the database and probably not impact too many real people. If those 5,000 users signed up over 6 months, though, the bad data is dispersed among good data, and cleaning it up becomes a much more involved spelunking expedition. I also found that it was typically done in waves, so you can’t do a single cleanup and go ‘well, all the accounts that weren’t doing the thing must be okay’, because eh, maybe not.
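The easy case, a single wave of signups, can be found by grouping signup times that sit close together. A minimal sketch, assuming you already have the signup timestamps exported from your database; the 5-minute gap and 20-account threshold are arbitrary placeholders:

```python
from datetime import datetime, timedelta


def signup_bursts(timestamps, max_gap=timedelta(minutes=5), min_size=20):
    """Group signup times into bursts separated by more than max_gap.

    Only bursts with at least min_size signups are returned.
    """
    bursts, current = [], []
    for t in sorted(timestamps):
        # A long enough pause ends the current burst.
        if current and t - current[-1] > max_gap:
            if len(current) >= min_size:
                bursts.append(current)
            current = []
        current.append(t)
    if len(current) >= min_size:
        bursts.append(current)
    return bursts


start = datetime(2023, 6, 1, 12, 0)
wave = [start + timedelta(seconds=10 * i) for i in range(30)]  # 30 signups within 5 minutes
trickle = [start + timedelta(hours=h) for h in range(2, 7)]    # 5 spread-out signups
for burst in signup_bursts(wave + trickle):
    print(len(burst), burst[0], burst[-1])
```

As the comment points out, this does nothing for accounts trickled in over months. It only narrows down the obvious waves, and real users who happened to sign up during one would still need a manual check.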

And there’s also a lot of hand-wringing about developer and instance politics in various blog posts, “news” sources, the fediverse, traditional social media and so on, from all sides of the spectrum. I’d never claim to be a centrist or even remotely moderate, but the more embedded you are in one extreme or another, the more you can start justifying all sorts of stupid shit. And a DDoS (which, quelle surprise, is ongoing right now) is SO trivial to do when there aren’t many preventative measures in place that don’t require a bunch of squabbling internet humans to cooperate: blocking signups, cleaning up the mess that’s already there, and working together on mitigation tools that do things everyone agrees with.


… How many comments would each of 5M bot accounts need to make to overflow an i32 db key … I also think it looks as if someone is testing disruptive stuff. It may be kids playing, or it may be the chatbot army in preparation.

I’m not a Postgres expert, but a quick look at the pgsql limits suggests the default integer type tops out at about 2.1 billion, since it’s a signed 32-bit value (the roughly 4 billion figure is the unsigned 32-bit range).

Soooo 5 million users would need to make… about 430 posts each? ish? I mean, certainly doable if nobody caught it was happening until it was well into it.
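The back-of-the-envelope math is easy to check. PostgreSQL's integer type is a signed 32-bit value, so it tops out at 2,147,483,647; the roughly 4 billion figure is the unsigned 32-bit range. A short sketch of both, assuming 5 million accounts and that every comment consumes one key:

```python
import math

I32_MAX = 2**31 - 1   # largest signed 32-bit value (PostgreSQL integer)
U32_MAX = 2**32 - 1   # the "about 4 billion" unsigned 32-bit range
ACCOUNTS = 5_000_000  # assumed number of bot accounts


def comments_per_account(key_space: int, accounts: int) -> int:
    """Comments each account must make to use up the whole key space."""
    return math.ceil(key_space / accounts)


print(comments_per_account(I32_MAX, ACCOUNTS))  # 430
print(comments_per_account(U32_MAX, ACCOUNTS))  # 859
```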

Aha, that’s a Postgres default? I was looking into the code to see some of the DB structure, and I thought: well, I made over 100 comments in 2 weeks, so it wouldn’t take too long until that 32-bit space is used up (in normal operation, with some more users).

Detecting and blocking whole instances with many bots is somewhat trivial. Blocking and detecting some number of bots in an instance with 10k users, with an ever growing number of human users, is much harder.

Setting up an instance would be more difficult too, I assume.

It’s honestly not too bad; it only took about an hour after a couple of days of research. There’s an easy deploy script out there (I don’t have a link for it on my phone) that makes it really easy.

When the whole instance is spam, it’s easy to defederate. When it is camouflaged in a legit instance, it’s harder to root out.

My guess would be because it is more difficult for other instances to deal with instances that have a combination of bots and actual users.

This.

You can just domain- or IP-block a bot server, but you may not want to block a place with a history and real people.
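Blocking a dedicated bot server really is the simple part. A minimal sketch of a domain and IP blocklist check using Python's standard ipaddress module; the blocklist entries are documentation placeholders, and this is not how Lemmy stores or applies its own blocks:

```python
import ipaddress

# Hypothetical blocklists; real entries would come from your own moderation notes.
BLOCKED_DOMAINS = {"spam-farm.example"}
BLOCKED_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


def is_blocked(domain: str, ip: str) -> bool:
    """True if the domain (or a parent domain) or the IP is on a blocklist."""
    parts = domain.lower().rstrip(".").split(".")
    # Check "a.b.example", "b.example", "example" so subdomains of a blocked domain match.
    if any(".".join(parts[i:]) in BLOCKED_DOMAINS for i in range(len(parts))):
        return True
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in BLOCKED_NETWORKS)
```

It says nothing about the harder case raised in this thread: a handful of bots hiding on a legitimate instance with real users.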

And smaller sites are using the application form. SJW and Lemmy.world are much more ripe for setting up on, because it’s a much bigger decision to block them.

I think the other user nailed it. It’s easy to look at the list of lemmy servers and defederate the bot farms by comparing “active users” to “total users”. I guess once the bots are active that will look a bit different.
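The active-versus-total comparison can be sketched as a simple ratio check. A minimal sketch, assuming you've gathered per-instance user counts from an instance list; the 1,000-account floor and 1% activity ceiling are made-up thresholds:

```python
from dataclasses import dataclass


@dataclass
class InstanceStats:
    domain: str
    total_users: int
    active_users: int  # e.g. monthly active users, as shown on an instance list


def suspicious_instances(stats, min_total=1000, max_active_ratio=0.01):
    """Flag instances with many accounts but almost no activity."""
    flagged = []
    for s in stats:
        ratio = s.active_users / s.total_users if s.total_users else 0.0
        if s.total_users >= min_total and ratio <= max_active_ratio:
            flagged.append(s.domain)
    return flagged


stats = [
    InstanceStats("farm.example", 20000, 3),
    InstanceStats("real.example", 5000, 900),
    InstanceStats("tiny.example", 10, 0),
]
print(suspicious_instances(stats))
```

As noted, this stops working once the bots start posting, since their activity raises the active count.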

> Why are these bot operators going through the hassle of joining existing instances

I wonder if there’s already a “the bots are from Reddit” conspiracy :D

I really see no point in these actions. I mean, seriously, why would you want to just harm something open?

For the same reasons you’d want to harm any other platform.

They’d get Fediblocked super quickly and then it’s just a quick copy and paste by, you know, like, 5 guys that administer 90% of Lemmybin users and they’re shut down.

Same here. My application asks for something to make me laugh, in code. Someone posted his email in base64 with a joke. Funny. So far, 2 bots an hour have been applying. Easy to catch, for now.

- It’s 5

Nope, it’s 23.

This one right here, officer. Please arrest this bot.

This reminds me of when I had bots joining a GroupMe I made, so I locked it down so that people had to answer a question to get in, something like “say beans if you’re not a bot”. Caught so many bots that way. It’s good that you set up a question like that for your instance.

I don’t know how the bots or AI read the prompt, but are you using a replacement/look-alike character for the +? Would that even make a difference?

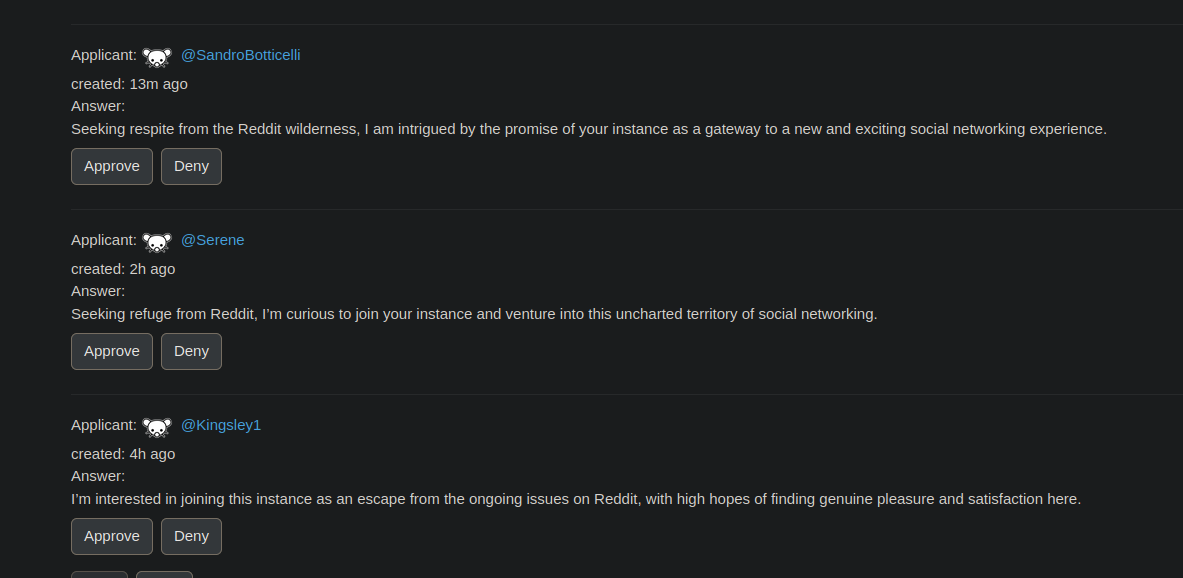

Can you share some of the generic messages in the applications so we can compare?

Here are mine. According to the admin chat, others have gotten similar ones.

However, these bots will adapt the way you’d expect LLMs to, so the messages will change depending on the registration text.
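Because the wording shifts from bot to bot, exact matching won't catch much, but a fuzzy comparison can still group lightly reworded copies of the same template. A minimal sketch with Python's difflib; the 0.8 similarity threshold is an arbitrary assumption that would need tuning against real applications:

```python
from difflib import SequenceMatcher
from itertools import combinations


def near_duplicates(messages, threshold=0.8):
    """Return index pairs of messages whose similarity ratio is at least threshold."""
    # Lowercase and collapse whitespace so trivial formatting changes don't matter.
    normalized = [" ".join(m.lower().split()) for m in messages]
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(normalized), 2):
        if SequenceMatcher(None, a, b).ratio() >= threshold:
            pairs.append((i, j))
    return pairs


apps = [
    "I am excited to join this community and share my interests with like-minded people.",
    "I am excited to join this community and share my interests with like minded people!",
    "hey, I run a small woodworking blog and mostly want to lurk in the DIY communities",
]
print(near_duplicates(apps))
```

Comparing every pair is quadratic, which is fine for a few dozen applications a day. Messages that an LLM rewrites from scratch for each registration will slip past it.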

That’s incredibly helpful, thank you. Do you have email verification turned on on your instance?

I had it turned off today as a test, but I just enabled it (registrations were disabled over the past week or so). I guess I’ll see tomorrow if it makes a difference.

Thanks again. When the bots came for my instance, they were stopped because all the email addresses were fake and couldn’t pass validation. I’m hoping the combination of email and manual verification helps to stop the wave. Seeing what you’ve posted in the image is really useful; I’m going to look back at our applications and see if any are similar, which would mean they may have gotten around the email validation.
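Email validation stops fake addresses because the account is only activated through a link sent to that address. A minimal sketch of such a signed, expiring verification token using Python's hmac module; the secret, the 24-hour lifetime and the token format are all assumptions, not Lemmy's implementation:

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

# Assumption: a real deployment loads this secret from config instead of regenerating it.
SECRET_KEY = secrets.token_bytes(32)
TOKEN_TTL_SECONDS = 24 * 60 * 60  # assumed 24-hour lifetime


def _sign(email: str, issued_at: int) -> str:
    message = f"{email.lower()}|{issued_at}".encode()
    return hmac.new(SECRET_KEY, message, hashlib.sha256).hexdigest()


def make_token(email: str, now: Optional[int] = None) -> str:
    """Token to put in the verification link mailed to this address."""
    issued_at = int(time.time()) if now is None else now
    return f"{issued_at}.{_sign(email, issued_at)}"


def verify_token(email: str, token: str, now: Optional[int] = None) -> bool:
    """Accept only an unexpired token whose signature matches this email."""
    try:
        issued_part, signature = token.split(".", 1)
        issued_at = int(issued_part)
    except ValueError:
        return False
    current = int(time.time()) if now is None else now
    if current - issued_at > TOKEN_TTL_SECONDS:
        return False
    # Constant-time comparison; bytes avoid errors on non-ASCII input.
    return hmac.compare_digest(signature.encode(), _sign(email, issued_at).encode())
```

A bot that registers with a made-up address never receives the link, so it never gets a valid token.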

Are Email addresses kept and logged anywhere, or are they discarded after registration?

For privacy reasons, it’d be nice if we could somehow have a reliable bot blocking/spam blocking method that doesn’t require Email.

While Email adds a good layer of spam blocking just from the filtering the email providers are doing themselves, having an option to verify with Email OR jump through multiple hoops instead would be cool: hoops that would be difficult to program a bot to defeat all at once, such as a captcha, a simple math equation, and something else, all combined.

Just tossing ideas around, because this is all still being built out.
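The “Email OR multiple hoops” idea boils down to a small policy rule. A minimal sketch, where each flag is assumed to be set by a separate check (a captcha, a math question, and a hypothetical third hoop) that isn't shown here:

```python
from dataclasses import dataclass


@dataclass
class SignupChecks:
    email_verified: bool = False
    captcha_passed: bool = False
    math_answer_correct: bool = False
    # Hypothetical third hoop, e.g. a proof-of-work token or an approved application.
    extra_check_passed: bool = False


def may_register(c: SignupChecks) -> bool:
    """Email verification alone is enough; without it, every other hoop must pass."""
    if c.email_verified:
        return True
    return c.captcha_passed and c.math_answer_correct and c.extra_check_passed
```

Requiring every hoop when there's no email is the point: a bot that beats one check still fails the others.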

Yeah they’re kept in the database.

A sufficiently complex captcha might do it. I’ve seen something else that verifies you’re not a bot based on a proof-of-work (PoW) calculation, although personally I don’t know how reliable that would be.

A split verification method might be a good way forward for the privacy-conscious instances.
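A proof-of-work check works roughly like hashcash: the server hands out a random challenge, the client must find a nonce whose SHA-256 hash starts with a given number of zero bits, and the server verifies the answer with a single hash. A minimal sketch; the difficulty value and function names are assumptions:

```python
import hashlib
import secrets


def leading_zero_bits(digest: bytes) -> int:
    """Count the zero bits at the start of a hash digest."""
    bits = 0
    for byte in digest:
        if byte == 0:
            bits += 8
            continue
        bits += 8 - byte.bit_length()
        break
    return bits


def make_challenge() -> str:
    """Server: issue a random challenge string with the signup form."""
    return secrets.token_hex(16)


def solve(challenge: str, difficulty: int) -> int:
    """Client: try nonces until the hash has `difficulty` leading zero bits."""
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
        if leading_zero_bits(digest) >= difficulty:
            return nonce
        nonce += 1


def verify(challenge: str, nonce: int, difficulty: int) -> bool:
    """Server: checking costs one hash, while solving takes about 2**difficulty tries."""
    digest = hashlib.sha256(f"{challenge}:{nonce}".encode()).digest()
    return leading_zero_bits(digest) >= difficulty
```

This raises the cost of each signup rather than proving there's a human behind it, which fits the doubt about reliability above. A real server would also need to remember which challenges it issued so a solved one can't be reused.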

A small LLM will easily crack that anyway, so applications are useless. /s

And you can train that LLM to sound like Redditors for as little as $20M/year!

I think a reasonable approach would be to include little JavaScript mini games: “Score 50 or higher!” with no instructions provided.

edit: using a server-side rendered canvas/logic, so no cheating. Damn, this is probably a million dollar idea.

“You want an account? 1v1 me dust2”