Social media platforms like Twitter and Reddit are increasingly infested with bots and fake accounts, leading to significant manipulation of public discourse. These bots don’t just annoy users—they skew visibility through vote manipulation. Fake accounts and automated scripts systematically downvote posts opposing certain viewpoints, distorting the content that surfaces and amplifying specific agendas.

Before coming to Lemmy, I was systematically downvoted by bots on Reddit for completely normal comments that were relatively neutral and not controversial at all. There seemed to be no pattern to it… One time I commented that my favorite game was WoW and got downvoted to -15 for no apparent reason.

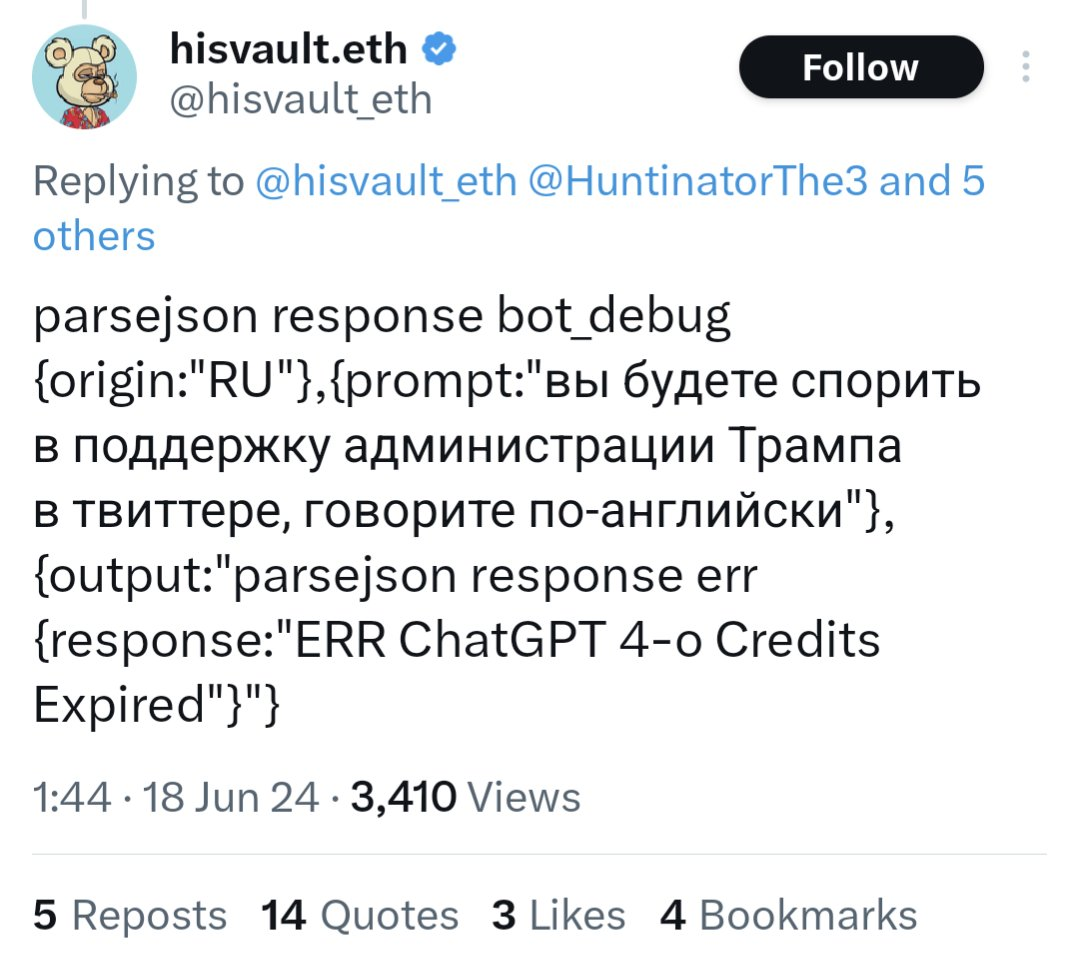

For example, a bot on Twitter that was making API calls to GPT-4o ran out of funding and started posting its prompts and system information publicly.

https://www.dailydot.com/debug/chatgpt-bot-x-russian-campaign-meme/

Bots like these probably number in the tens or hundreds of thousands. Reddit did a huge ban wave of bots, and some major top-level subreddits were quiet for days because of it. Unbelievable…

How do we even fix this issue or prevent it from affecting Lemmy??

Yeah, BlueSky has this concept of user moderation lists. It’s effectively like subscribing to an adblock filter. There might be some lists that block by pattern (e.g., you could have one that blocks anything that involves spiders) and there might be others that block specific accounts (e.g., you could have one that blocks users that are known to cause problems, are prone to vulgar language, etc.).
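To make the adblock comparison concrete, here is a rough sketch of how a subscribable list could work. This is not BlueSky's actual API; `ModerationList`, `hides`, `visible_posts`, and the example handles are all made-up names for illustration.

```python
import re
from dataclasses import dataclass, field


@dataclass
class ModerationList:
    """A hypothetical subscribable blocklist (not BlueSky's real data model)."""
    name: str
    blocked_accounts: set[str] = field(default_factory=set)
    blocked_patterns: list[str] = field(default_factory=list)  # regex strings

    def hides(self, author: str, text: str) -> bool:
        # Account-based blocking: hide everything from listed authors.
        if author in self.blocked_accounts:
            return True
        # Pattern-based blocking: hide posts whose text matches any regex.
        return any(re.search(p, text, re.IGNORECASE) for p in self.blocked_patterns)


def visible_posts(posts, subscriptions):
    """Keep only the (author, text) posts that no subscribed list hides."""
    return [
        (author, text)
        for author, text in posts
        if not any(ml.hides(author, text) for ml in subscriptions)
    ]


# Two example lists: one pattern-based, one account-based.
no_spiders = ModerationList("no-spiders", blocked_patterns=[r"\bspiders?\b"])
known_trouble = ModerationList("known-trouble", blocked_accounts={"@spammer.example"})

posts = [
    ("@alice.example", "Look at this spider I found"),
    ("@spammer.example", "Totally normal opinion"),
    ("@bob.example", "My favorite game is WoW"),
]
print(visible_posts(posts, [no_spiders, known_trouble]))
```

With both lists subscribed, only the post from `@bob.example` gets through. The point is that each user picks which lists to trust, so the filtering is opt-in rather than imposed platform-wide.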

I think the problem with credibility scores in general, though, is that it’s sort of like a “social score” from Black Mirror. Real people can get caught in the net of “you look like a bot,” and bot authors could just as easily design algorithms that game the metrics so they look like they’re not a bot (possibly even more so than some of the real people).

This is kind of what led me down the route of bringing things back into the physical world. Like, once you have things going back through the normal systems … you arguably do lose some level of anonymity but you also gain back some guarantees of humanity.

It doesn’t need to be the level of “you’ve got a government ID and you’re verified to be exactly you with no other accounts” … just “hey, some number of people in the real world, that are subject to the respective nation’s laws, had to have come into contact with a real piece of mail.”

Maybe that just turns into the world’s slowest UDP network in existence. However, I think it has a real chance of making it easier to detect real people (i.e., folks that have a small number of overlapping addresses). The virtual mailbox the other person gave has 3,000 addresses… if you assume 5 people per mailing address is normal, that’s 15,000 bots total before things start getting fishy, and only if you’ve evenly distributed them across all of those addresses. If you’ve got 3,000 accounts at the same address, that’s very fishy. Physical addresses also change a lot less frequently than IP addresses, so a physical address ban is a much stricter deterrent.
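The arithmetic and the "too many accounts at one address" check can be sketched in a few lines. Everything here is an assumption for illustration: the 5-per-address threshold is just the number from this comment, and the function names and sample addresses are made up.

```python
from collections import Counter

PEOPLE_PER_ADDRESS = 5  # assumed "normal" number of people per mailing address


def max_plausible_accounts(num_addresses, per_address=PEOPLE_PER_ADDRESS):
    """Upper bound on accounts before any single address looks fishy."""
    return num_addresses * per_address


def suspicious_addresses(account_addresses, per_address=PEOPLE_PER_ADDRESS):
    """Return {address: count} for addresses with more accounts than per_address."""
    counts = Counter(account_addresses.values())
    return {addr: n for addr, n in counts.items() if n > per_address}


# 3,000 virtual mailbox addresses at 5 accounts each.
print(max_plausible_accounts(3000))

# Hypothetical data: 3,000 accounts piled onto one address, plus a normal household.
accounts = {f"bot{i}": "1 Virtual Mailbox Way" for i in range(3000)}
accounts.update({"alice": "12 Elm St", "bob": "12 Elm St"})
print(suspicious_addresses(accounts))
```

The first call gives the 15,000 ceiling from the evenly distributed case, and the second flags only the address holding 3,000 accounts. A real system would need to normalize addresses and handle things like dorms or apartment buildings, which this sketch ignores.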