- cross-posted to:

- hackernews@lemmy.smeargle.fans

Kudos to AMD for supporting Linux

Meanwhile Nvidia:

I think your comment isn’t displaying correctly; it stops after the “:”. That would mean Nvidia does nothing 🤣🤣 and that would be so stupid of them 🤣🤣

Exactly 😂😂

… busy with other things like

On the bright side, they might have to take video game cards seriously again.

I would so much rather run AMD than Nvidia for AI.

I’ll run whichever doesn’t require a bunch of proprietary software. Right now it’s neither.

AMD’s ROCm stack is fully open source (except GPU firmware blobs). Not as good as Nvidia yet but decent.

Mesa also has its own OpenCL stack, but I haven’t tried it yet.

AMD ROCm needs the AMD Pro drivers, which are painful to install and are proprietary.

It does not.

ROCm runs directly through the open source amdgpu kernel module, I use it every week.
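
A quick sanity check for that setup is to confirm the `amdgpu` module is loaded and that `/dev/kfd`, the compute device node ROCm talks to, exists. This is a minimal Python sketch, assuming the standard Linux paths `/proc/modules` and `/dev/kfd`; the helper names are made up for illustration and this is not an official ROCm tool.

```python
import os


def module_loaded(proc_modules_text: str, name: str) -> bool:
    """Return True if `name` appears as a module in /proc/modules-style text."""
    # Each line of /proc/modules starts with the module name followed by a space.
    return any(line.split(" ", 1)[0] == name for line in proc_modules_text.splitlines())


def rocm_ready(proc_modules_path: str = "/proc/modules", kfd_path: str = "/dev/kfd") -> bool:
    """Check for the loaded amdgpu module and the KFD device node ROCm uses."""
    try:
        with open(proc_modules_path) as f:
            modules = f.read()
    except OSError:
        return False  # not Linux, or /proc is not available
    return module_loaded(modules, "amdgpu") and os.path.exists(kfd_path)


if __name__ == "__main__":
    print("amdgpu + /dev/kfd present:", rocm_ready())
```

If `amdgpu` is built into the kernel instead of loaded as a module, it won’t show up in `/proc/modules`, so a `False` there isn’t conclusive on its own.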

How, and with what card? I have an XFX RX 590 and just gave up on acceleration, as it was slow even after I initially set it up.

I use a 6900 XT and run llama.cpp and ComfyUI inside Docker containers. I don’t think the RX 590 is officially supported by ROCm. There’s an environment variable you can set to enable support for unsupported GPUs, but I’m not sure how well it works.
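
The variable people usually mean here is `HSA_OVERRIDE_GFX_VERSION`, which tells the ROCm runtime to treat the card as a different, supported gfx target. Below is a minimal Python sketch of setting it for a single launched process, assuming you start the workload from a script; `rocm_env` and `run_with_override` are hypothetical helpers, and `10.3.0` (gfx1030, the 6900 XT’s own target) is only an example value. The right value depends on the card, and there’s no guarantee any value helps an older Polaris card like the RX 590.

```python
import os
import subprocess
from typing import Mapping, Optional, Sequence


def rocm_env(gfx_override: Optional[str] = None,
             base: Optional[Mapping[str, str]] = None) -> dict:
    """Copy an environment, optionally adding HSA_OVERRIDE_GFX_VERSION."""
    env = dict(os.environ if base is None else base)
    if gfx_override is not None:
        # Ask the ROCm runtime to treat the GPU as this gfx version,
        # e.g. "10.3.0" for gfx1030. Mismatched values may crash or misbehave.
        env["HSA_OVERRIDE_GFX_VERSION"] = gfx_override
    return env


def run_with_override(cmd: Sequence[str], gfx_override: str) -> int:
    """Run a command with the override set only for that child process."""
    return subprocess.run(list(cmd), env=rocm_env(gfx_override)).returncode
```

For example, `run_with_override(["./main", "-m", "model.gguf"], "10.3.0")`, where the binary and model path are placeholders. Scoping the variable to the child process keeps it from leaking into the rest of your shell session.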

AMD provides the handy rocm/dev-ubuntu-22.04:5.7-complete image, which is absolutely massive but comes with everything needed to run ROCm without dependency hell on the host. I just build llama.cpp and ComfyUI containers on top of that and run them.
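
For reference, a container built on that image needs the GPU device nodes passed through. Here’s a hedged Python sketch that assembles the `docker run` command line; `--device=/dev/kfd`, `--device=/dev/dri` and `--group-add video` are the flags ROCm’s Docker documentation commonly shows, while the helper name and the optional `-e` handling are illustrative assumptions. Some distros also put the render nodes in a `render` group, which may need adding too.

```python
import shlex
from typing import Dict, List, Optional

ROCM_IMAGE = "rocm/dev-ubuntu-22.04:5.7-complete"


def docker_run_argv(image: str = ROCM_IMAGE,
                    env: Optional[Dict[str, str]] = None,
                    command: Optional[List[str]] = None) -> List[str]:
    """Build a docker run argv that exposes an AMD GPU to the container."""
    argv = [
        "docker", "run", "--rm", "-it",
        # Expose the ROCm compute interface and the GPU render nodes.
        "--device=/dev/kfd",
        "--device=/dev/dri",
        # The host's video group typically owns those device nodes.
        "--group-add", "video",
    ]
    for key, value in (env or {}).items():
        argv += ["-e", f"{key}={value}"]
    argv.append(image)
    argv += command or []
    return argv


if __name__ == "__main__":
    print(shlex.join(docker_run_argv(command=["bash"])))
```

Printing it with `shlex.join` lets you paste the result into a shell; passing the list straight to `subprocess.run` avoids quoting issues entirely.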

That’s good to know

Finally, it was only after a massive GitHub issue with thousands of people

I can’t wait for this bullshit AI hype to fizzle. It’s getting obnoxious. It’s not even AI.

This is the best summary I could come up with:

Ryzen AI is beginning to make its way into more processors, yet it hasn’t been supported on Linux.

Then in October, AMD said it wanted to hear customer requests for Ryzen AI Linux support.

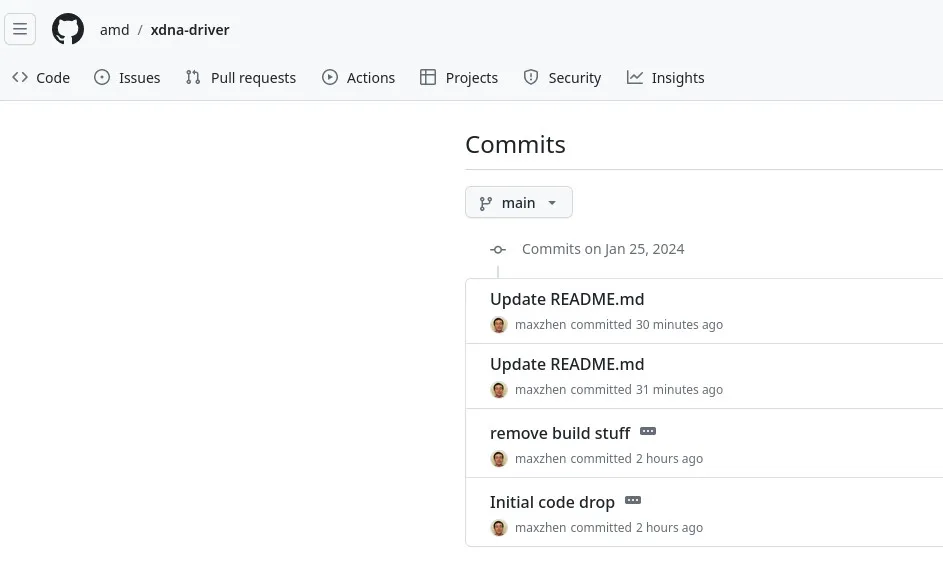

Well, today they made their first public code drop of the XDNA Linux driver, which provides open-source support for Ryzen AI.

The XDNA driver will work with AMD Phoenix/Strix SoCs so far having Ryzen AI onboard.

AMD has tested the driver on Ubuntu 22.04 LTS, but you will need to be running Linux 6.7 or newer with IOMMU SVA support enabled.
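
Both requirements can be checked up front. A minimal Python sketch, assuming “IOMMU SVA support” maps to the `CONFIG_IOMMU_SVA` kernel option and that the kernel config lives at `/boot/config-<release>` as on Ubuntu; the helper names are hypothetical, and a passing check doesn’t guarantee the XDNA driver will load.

```python
import platform
import re
from typing import Optional, Tuple


def parse_kernel_version(release: str) -> Optional[Tuple[int, int]]:
    """Extract (major, minor) from a release string like '6.7.0-060700-generic'."""
    m = re.match(r"(\d+)\.(\d+)", release)
    return (int(m.group(1)), int(m.group(2))) if m else None


def kernel_is_new_enough(release: str, minimum: Tuple[int, int] = (6, 7)) -> bool:
    """True if the running kernel is at least `minimum` (numeric comparison)."""
    version = parse_kernel_version(release)
    return version is not None and version >= minimum


def sva_enabled(config_text: str) -> bool:
    """True if a kernel .config enables CONFIG_IOMMU_SVA."""
    return any(line.strip() == "CONFIG_IOMMU_SVA=y" for line in config_text.splitlines())


if __name__ == "__main__":
    release = platform.release()
    print("kernel", release, "new enough:", kernel_is_new_enough(release))
    try:
        with open(f"/boot/config-{release}") as f:
            print("CONFIG_IOMMU_SVA=y:", sva_enabled(f.read()))
    except OSError:
        print("no /boot/config for this kernel; try /proc/config.gz instead")
```

Comparing `(major, minor)` tuples rather than strings matters here: as strings, `"6.10" < "6.7"`, which would wrongly reject newer kernels.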

In any event, I’ll be working on getting more information about their Ryzen AI / XDNA Linux plans for future article(s) on Phoronix, as well as trying out this driver once the software support expectations are known.

The original article contains 280 words, the summary contains 138 words. Saved 51%. I’m a bot and I’m open source!

A+ timing: I’m upgrading from a 1050 Ti to a 7800 XT in a couple of weeks! I don’t care too much for “ai” stuff in general, but hey, an extra thing to fuck around with at no extra cost is fun.

This is not about normal GPUs but about these dedicated AI chips.

I’m a bit confused; the information isn’t very clear. I think this might not apply to typical consumer hardware, but rather to specialized CPUs and GPUs?

Shows how much I read articles ig

Wait, can I finally use my old Radeon card to run AI models?

Unfortunately not.

“The XDNA driver will work with AMD Phoenix/Strix SoCs so far having Ryzen AI onboard.” So only mobile SoCs with dedicated AI hardware, for the time being.

Welp… I guess Radeon will stay a gaming-only GPU instead of being good for productivity as well. Thankfully, I no longer need my GPU for productivity stuff.

Is that the stuff used on servers, or just for small tasks on laptops? Because if it’s on servers, anything else would be stupid.