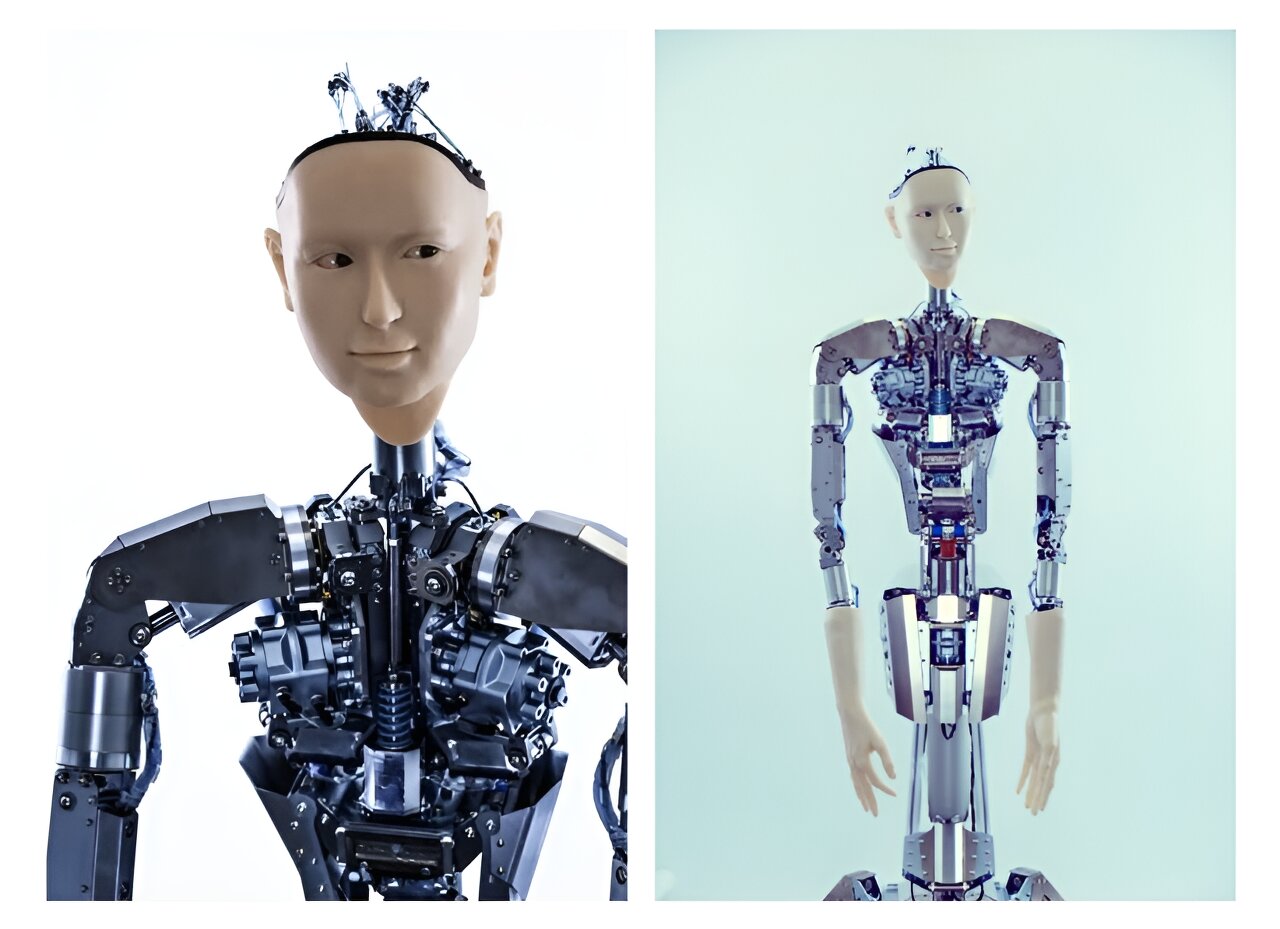

A team of researchers at the University of Tokyo has built a bridge between large language models and robots that promises more humanlike gestures while dispensing with traditional hardware-dependent controls.


“Thanks to LLM, we are now free from the iterative labor,” the authors said.

Now, they can simply give verbal instructions describing the desired movements, along with a prompt instructing the LLM to write Python code that runs the android engine.
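Below is a minimal sketch of that instruction-to-code loop. It assumes a hypothetical `set_axis(axis, value)` robot interface, a placeholder axis count and 0..255 command range, and a stubbed `fake_llm` function standing in for a real model call. The article does not describe the actual Alter3 control API or prompts, so none of these names come from the researchers.

```python
from typing import Callable, Dict


class FakeAndroid:
    """Hypothetical stand-in for an android's motor interface."""

    def __init__(self, num_axes: int = 16) -> None:
        # Placeholder axis count; not taken from the article.
        self.num_axes = num_axes
        self.positions: Dict[int, int] = {}

    def set_axis(self, axis: int, value: int) -> None:
        if not 0 <= axis < self.num_axes:
            raise ValueError(f"axis {axis} out of range")
        # Clamp to an assumed 0..255 command range.
        self.positions[axis] = max(0, min(255, value))


def build_prompt(instruction: str) -> str:
    # Hypothetical prompt: tells the model which function it may call.
    return (
        "You control an android through the function set_axis(axis, value). "
        "Write Python code that performs this movement:\n"
        f"{instruction}"
    )


def fake_llm(prompt: str) -> str:
    # Stand-in for a real LLM call; always returns the same canned code.
    return "set_axis(1, 200)\nset_axis(2, 300)\n"


def run_instruction(instruction: str, robot: FakeAndroid,
                    llm: Callable[[str], str] = fake_llm) -> str:
    code = llm(build_prompt(instruction))
    # Run the generated code with only set_axis exposed.
    # This limits the namespace but is NOT a real security sandbox.
    exec(code, {"__builtins__": {}, "set_axis": robot.set_axis})
    return code


if __name__ == "__main__":
    robot = FakeAndroid()
    run_instruction("Raise the right arm slowly", robot)
    print(robot.positions)  # {1: 200, 2: 255}
```

Passing the LLM in as a callable keeps the sketch testable offline; swapping in a real model client would only change that argument.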

Alter3, the humanoid robot used in the study, retains activities in memory, so researchers can refine and adjust its actions, leading to faster, smoother, and more accurate movements over time.
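The memory-and-refinement idea can be sketched as a small store of named motions, each kept with its revision history. This sketch is illustrative only: the `MotionMemory` class, the `(axis, value)` motion format, and the direct numeric `refine` step are hypothetical simplifications. In the setup the article describes, feedback is given verbally and the LLM revises the code, rather than an axis value being edited by hand.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical motion format: a sequence of (axis, value) commands.
Motion = List[Tuple[int, int]]


@dataclass
class MotionMemory:
    actions: Dict[str, Motion] = field(default_factory=dict)
    history: Dict[str, List[Motion]] = field(default_factory=dict)

    def store(self, name: str, motion: Motion) -> None:
        # Save the latest version and append it to that action's history.
        self.actions[name] = list(motion)
        self.history.setdefault(name, []).append(list(motion))

    def refine(self, name: str, axis: int, new_value: int) -> Motion:
        # Apply one piece of feedback: change the value for a single axis.
        refined = [(a, new_value if a == axis else v)
                   for a, v in self.actions[name]]
        self.store(name, refined)
        return refined

    def recall(self, name: str) -> Motion:
        # Return a copy so callers cannot mutate the stored motion.
        return list(self.actions[name])


if __name__ == "__main__":
    memory = MotionMemory()
    memory.store("wave", [(1, 200), (2, 120)])
    memory.refine("wave", 2, 90)
    print(memory.recall("wave"))        # [(1, 200), (2, 90)]
    print(len(memory.history["wave"]))  # 2
```

Keeping every revision in `history` allows an earlier version of a motion to be restored if a refinement makes it worse.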

This is a really interesting application of LLMs.